"Enhanced Visual Inference and Chinese Comprehension with Qwen-VL-Max Model"

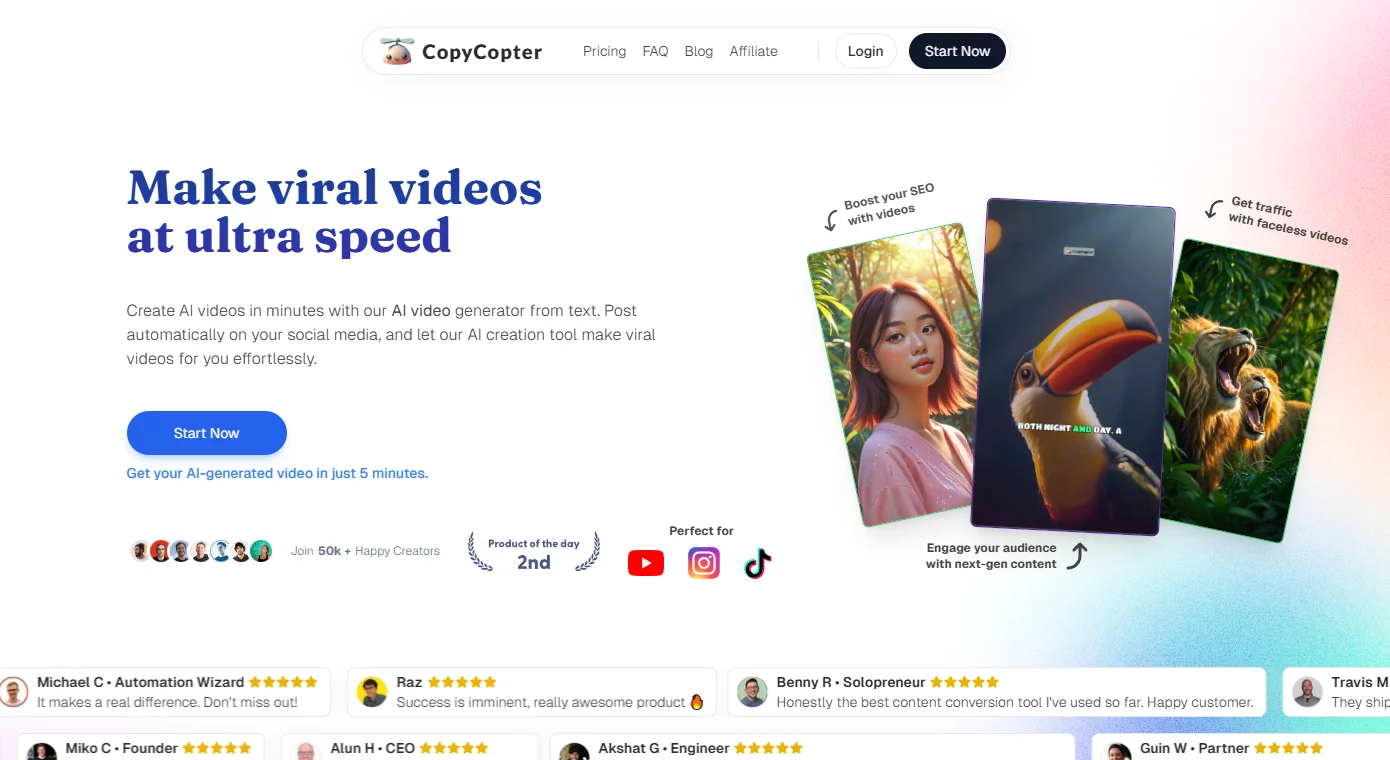

Tongyi Qianwen recently upgraded its visual understanding model Qwen-VL, releasing a Max version to follow the Plus version. The upgraded model shows significantly stronger visual reasoning and Chinese comprehension, and gives users more capable image recognition, question answering, creative writing, and coding. In multiple authoritative evaluations, Qwen-VL-Max has performed exceptionally well, with overall performance comparable to GPT-4V and Gemini Ultra.

In terms of basic capabilities, Qwen-VL-Max can accurately describe and recognize the content of an image, then reason about that content and expand on it creatively. The model also has visual localization capabilities, which allow it to answer questions about specific regions of an image.

Qwen-VL-Max performs especially well in visual reasoning. It can understand complex image types such as flowcharts, analyze complex icons, and answer questions, write essays, and write code based on images. These features enable Qwen-VL-Max to handle multimodal data more efficiently and accurately.

In image-text processing, Qwen-VL-Max's recognition of Chinese and English text has improved significantly. It supports high-resolution images of more than a million pixels as well as images with extreme aspect ratios, and it can reproduce dense text in full and extract information from tables and documents. This is valuable in practical applications such as document processing and report analysis.

As multimodality becomes the next hot topic in the field of large models, vision has become the most important of the modalities. Tongyi Qianwen's visual language model is built on the Tongyi Qianwen LLM: a visual representation learning model is aligned with the LLM so that the AI can understand visual information. This opens a "window" of vision in the "mind" of the large language model, enabling it to better understand and process what it sees.

Compared with text-only LLMs, multimodal large models have greater application potential. For example, researchers are exploring how to combine multimodal large models with autonomous driving scenarios to find new technological paths toward "fully autonomous driving". Deploying multimodal models on edge devices such as smartphones, robots, and smart speakers, so that these devices can automatically understand information in the physical world, is another promising application. Applications built on multimodal models could also assist visually impaired people in their daily lives, which would be of great significance.

To let users try Qwen-VL-Max for themselves, Tongyi Qianwen has made the Max version available free of charge for a limited time. Users can try the model directly on the Tongyi Qianwen official website and app, or call the model API through the Alibaba Cloud Lingji platform (DashScope).
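
As a rough illustration of what calling the model through DashScope might look like, the sketch below builds a single-turn message that pairs one image with one question and passes it to the DashScope Python SDK. Several things here are assumptions rather than details from the announcement: the model identifier `qwen-vl-max`, the `DASHSCOPE_API_KEY` environment variable, the message layout, and the helper names `build_messages` and `ask_about_image`, which are hypothetical. Check the current DashScope documentation before relying on any of it.

```python
import os
from typing import Any, Dict, List


def build_messages(image_url: str, question: str) -> List[Dict[str, Any]]:
    """Build a single-turn user message pairing one image with one text prompt.

    The layout (a list of {"image": ...} / {"text": ...} items) is the shape
    assumed here for DashScope multimodal requests.
    """
    return [
        {
            "role": "user",
            "content": [
                {"image": image_url},
                {"text": question},
            ],
        }
    ]


def ask_about_image(image_url: str, question: str, model: str = "qwen-vl-max"):
    """Send the request. Requires `pip install dashscope` and an API key.

    The model name is an assumption; the identifier actually exposed by the
    platform may differ.
    """
    import dashscope  # imported lazily so build_messages works without the SDK

    dashscope.api_key = os.environ["DASHSCOPE_API_KEY"]
    return dashscope.MultiModalConversation.call(
        model=model,
        messages=build_messages(image_url, question),
    )


# Example usage (performs a network call, so it is left commented out):
# response = ask_about_image(
#     "https://example.com/flowchart.png",
#     "What process does this flowchart describe?",
# )
# print(response)
```

Keeping the payload construction separate from the network call makes it easy to inspect or log the request before sending it.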

Overall, the Qwen-VL-Max visual understanding model from Tongyi Qianwen further strengthens AI's capabilities in visual reasoning and Chinese comprehension. As multimodal large models continue to develop, there is reason to believe that AI will show strong application potential in more fields.